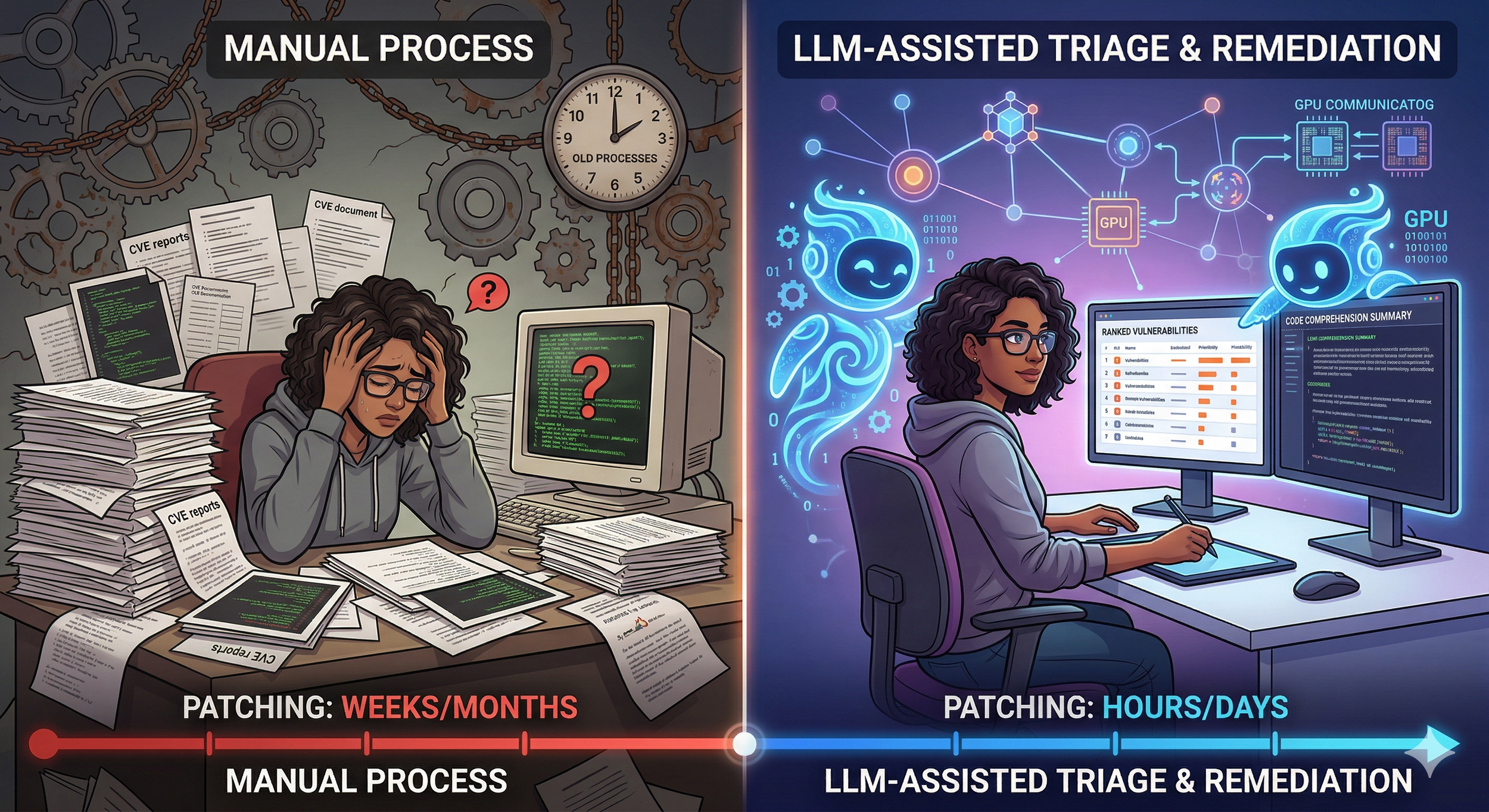

If you are worried about the onslaught of LLM discovered vulnerabilities, the answer isn't to shut down bug bounty programs and batten down the hatches. Your problem lies in the friction of adoption of new LLM tools and processes in your triage, response, and remediation. They can move at LLM speed too, if you unlock AI tools for your teams and promote LLM competency among them.

Patches move slowly because people move slowly. Not from laziness but from the sheer weight of what a patch demands and the slow antiquated processes you haven't updated with LLM tools. A developer gets a CVE alert. She opens a codebase she last touched two years ago. She reads for an hour before she even finds the affected function. She reads for another hour to understand what it does. Then she has to figure out whether the fix will break something three modules away that she has never seen. The vulnerability itself might take ten lines to fix. The understanding takes days, because you haven't armed your teams with enough LLM tools and token budgets.

LLMs compress that reading time. This is not speculation. Code comprehension is the single task where today's models perform most reliably, because the full source is available as context and the question is concrete: what does this function do, what calls it, what data flows into it. A developer who would spend a day tracing call chains can get a working summary in minutes. They still have to verify it. But verification is faster than discovery.

The same compression applies to triage. Most organizations drown in CVE volume. The National Vulnerability Database published over 28,000 entries in 2023. A mid-size company might run 400 distinct software components. Matching those two lists and analyzing which CVEs affect which components, and which of those matches actually matter given how the component is used is tedious. It's context-heavy work that humans do poorly under time pressure. An LLM that can read both the advisory text and the local codebase can produce a first-pass risk ranking in seconds. The security team still makes the call, but they start with a sorted list instead of a pile. The progressive AI forward companies are already doing this, why haven't you started to do so with yours?

Dependency analysis is the third bottleneck where the gain is real. Modern software stacks pull in hundreds of transitive dependencies. When a vulnerability sits in a library four layers down, the fix means upgrading that library, which means checking whether anything in the three layers above it breaks. This is graph traversal plus behavioral reasoning — exactly the kind of task where an LLM can trace the paths and flag the likely failure points. The human still run and analyze the tests. But the LLM tells them which tests to write first.

Testing itself gets faster, though perhaps less dramatically. LLMs can draft regression tests for a known vulnerability, generate edge-case inputs, scaffold the harness, deploy the VMs and test environment. The hard limit is CI pipeline time and environment setup, which can't be shrunk fully with models and agents, though still automated further than most are doing today. But cutting test-authoring time by even half matters when the queue of unpatched vulns is long, LLMs and agents cut it by much much more than half.

Let me share an example of a vulnerability triage analysis I took part in last week. The vulnerability report concerned a theroretical VM escape via GPUs, and normally it might have taken several days to set up the test lab environment, load drivers, dependencies, and write example CUDA code to verify. In this case LLMs identified deployment areas with quotas for the specific machine configurations needed, deployed a test environment and prepared it and driver and stack dependencies in thirty minutes, then automatically deployed ai agents to the VMs. The agents, under my guidance, tested the GPU communciations topologies — and I identified some mistakes the agents made in deployment and harness setup. They redeployed corrections in ten minutes. Then agents wrote enumeration and verification CUDA code which I checked and we iterated four or five times on. The whole process took a few hours to verify how the reported vulnerability was mitigated, not the weeks it would have taken without LLMs. LLMs wrote the vulnerability triage report and it was on its way to the customer executive that was first handed the alarming report to verify — in hours. All at LLM speed.

Now consider the organizational drag. Change review boards exist because organizations rightly fear breaking production. The review takes time because the board members need to understand the change, and the requesting developer writes a poor summary because writing is not her strength and she is busy. An LLM can turn a diff and a commit message into a plain-language impact statement that a non-developer can read in two minutes. This does not eliminate the review. It makes the review fast enough that it stops being a bottleneck.

The objection most developers raise is that LLM output is unreliable, which is more of an issue for code generation, and getting less so. A model that writes a patch from scratch might produce confident mistakes that cost more time to find than the patch would have taken to write by hand. But the argument here is not that LLMs should write patches unsupervised. The argument is that LLMs should read code, summarize risk, trace dependencies, draft tests, and write change descriptions. These are all tasks where the output is verified before use and where a wrong answer still costs less than the status quo of not doing the work at all because nobody has time.

The arithmetic favors this view. An attacker using an LLM to find a new vulnerability needs the model to be right once. A defender using an LLM to triage and patch needs the model to be roughly right most of the time, with a human checking the remainder. The defender's error tolerance is higher, not lower, than the attacker's — provided the human stays in the loop. Strip the human out and the calculus flips. Keep the human in and the defender holds an advantage the attacker lacks: full access to the source, the dependency graph, the test suite, and the deployment history. LLMs amplify the value of that access.

Organizations that hand their developers these tools aimed at comprehension and triage rather than autonomous code generation will patch faster. Not at LLM speed. At human speed, with LLM assistance. The difference between a three-week patch cycle and a three-day patch cycle is the difference between a vulnerability that gets exploited and one that does not. The tools exist now, the bottleneck is adoption and organizational inertia around modern AI tools.PATCHING @AI SPEED

By Dragos Ruiu - April 11 2026